Systems that must act on data quickly, such as fraud detection and live feeds, benefit the most. This architecture lets them react to events as they occur.

Using event-driven architecture makes apps more agile and efficient. It helps businesses stay ahead in today’s fast-moving world.

Introduction to Event-Driven Architecture

Event-driven architecture (EDA) is a way of designing systems in which events, such as user actions, trigger processing and state changes. It is widely used today for real-time information processing.

Definition of Event-Driven Architecture

Simply put, EDA treats events as the triggers for change. A component announces that something happened, and other components react to it without the sender needing to know who they are. This keeps the design flexible, and it works well in complex settings.

Importance in Modern Systems

Event-driven systems are vital today. They help make systems responsive, scalable, and easy to maintain. Combined with cloud computing, they help systems grow and adapt.

The use of asynchronous communication makes systems resilient and fast. This is a big plus when handling variable loads.

Historical Context

Event-driven systems have evolved considerably over time. They started with simple messaging and have grown into today’s real-time, cloud-based platforms.

Here’s a table of key moments in event-driven architecture’s history:

| Era | Development | Impact |
|---|---|---|
| 1960s-1970s | Introduction of basic messaging systems | Laid the foundation for signaling mechanisms |
| 1980s-1990s | Advancements in middleware technology | Better component integration and communication |
| 2000s-Present | Emergence of real-time and cloud-based EDA | Enhanced scalability, flexibility, and performance |

Core Concepts of Event-Driven Architecture

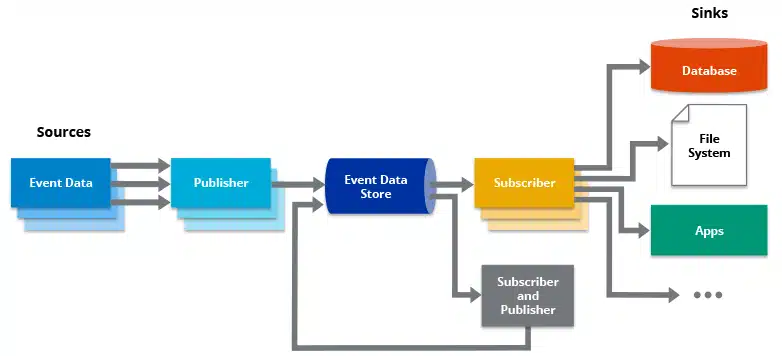

An event-driven architecture uses actions, or “events,” to guide data flow and communication. This method makes systems react quickly to changes. It also makes them more flexible.

Events and Event Producers

Events and event producers are key in event-driven systems. An event is a significant occurrence or change in state, like a mouse click or a completed transaction. Event producers are the components that emit these events. They can be user interfaces, IoT devices, or backend services.

Event Consumers

Event consumers are important in event-driven programming. They listen for events and act on them asynchronously, which helps systems handle many scenarios without slowing down.

An example is a microservice that runs a task whenever a certain type of event arrives.

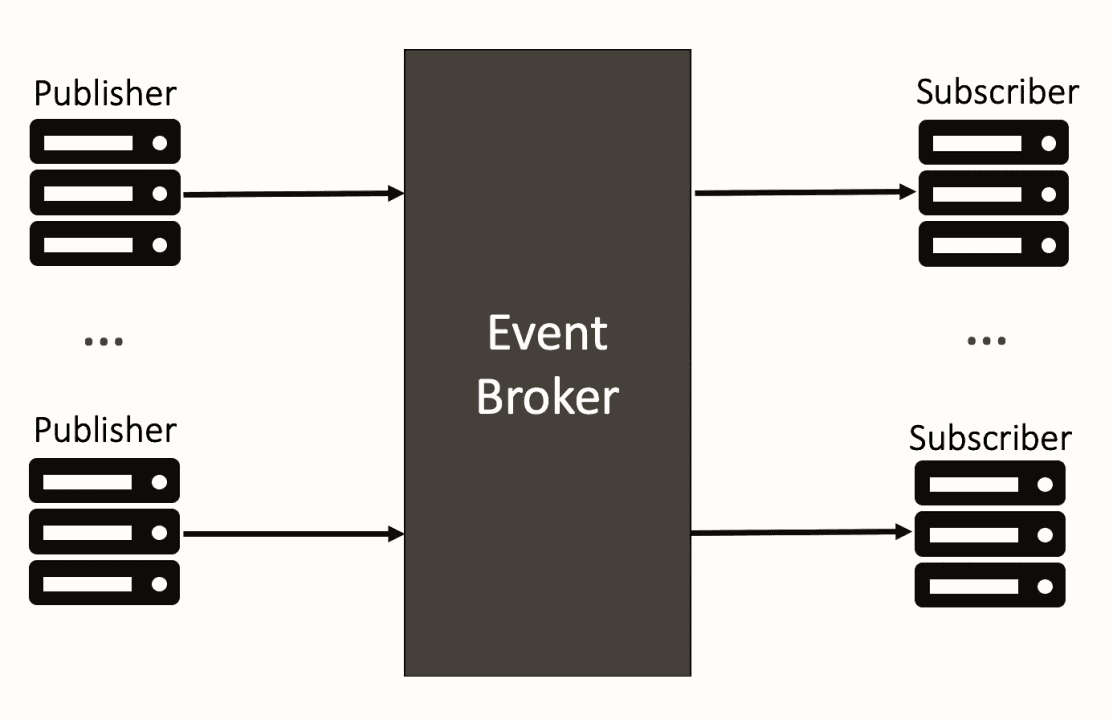

Event Routers and Brokers

Event routers and brokers are crucial for good event-driven systems. They make sure events get to the right consumers. Brokers like Apache Kafka and RabbitMQ store and deliver events, which makes systems scalable and easier to maintain.

| Aspect | Description | Example |
|---|---|---|
| Event Producers | Entities that generate state-changing events | User interfaces, sensors |
| Event Consumers | Components that process and react to events | Microservices, event handlers |
| Event Routers and Brokers | Middleware that routes events to appropriate consumers | Apache Kafka, RabbitMQ |
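To make the three roles concrete, here is a minimal in-process sketch in Python. The `Event` and `EventBus` classes, the `order_placed` event type, and the `shipping_service` handler are hypothetical names chosen for illustration. The bus calls handlers synchronously for brevity, whereas a real broker such as Apache Kafka or RabbitMQ would deliver events asynchronously over the network.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, DefaultDict, List


@dataclass(frozen=True)
class Event:
    """A record of something that happened (hypothetical shape)."""
    event_type: str
    payload: dict


class EventBus:
    """Toy stand-in for an event router: maps event types to consumers."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Callable[[Event], None]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[Event], None]) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event: Event) -> None:
        # Deliver the event to every consumer registered for its type.
        for handler in self._handlers.get(event.event_type, []):
            handler(event)


shipped_orders: List[str] = []


def shipping_service(event: Event) -> None:
    """Consumer: reacts to order events without knowing who produced them."""
    shipped_orders.append(event.payload["order_id"])


bus = EventBus()
bus.subscribe("order_placed", shipping_service)

# Producer side: emit events and move on.
bus.publish(Event("order_placed", {"order_id": "A-100"}))
bus.publish(Event("user_logged_in", {"user": "sam"}))  # no consumer registered, so nothing happens
```

The producer never references `shipping_service` directly, so new consumers can be added by calling `subscribe` without touching producer code.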

How Event-Driven Architecture Works in Stream Processing

In today’s computing world, event processing is key for quick data analysis. It captures data streams from many sources. When events happen, they are processed right away to produce insights or trigger actions.

Event streaming lets businesses use real-time analytics. It treats data as a continuous flow of events, not just static records. This way, systems can handle large volumes of data quickly.

Each event carries its own context, such as what happened and when, which supports deeper analysis and quick action. A stream processor can also keep state between events, so each new event is interpreted in light of earlier ones.
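As a rough illustration of keeping state between events, the sketch below flags a card that makes more than a set number of transactions inside a sliding time window. The function name `flag_bursts`, the `(timestamp, card_id)` event shape, and the threshold values are assumptions made for this example, not part of any particular streaming product.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Iterable, List, Tuple


def flag_bursts(
    events: Iterable[Tuple[float, str]],
    window_seconds: float = 60.0,
    threshold: int = 3,
) -> List[Tuple[float, str]]:
    """Return (timestamp, card_id) for each event that pushes a card over the threshold.

    Events are assumed to arrive ordered by timestamp.
    """
    recent: Dict[str, Deque[float]] = defaultdict(deque)  # per-card state kept between events
    alerts: List[Tuple[float, str]] = []
    for timestamp, card_id in events:
        window = recent[card_id]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while window and timestamp - window[0] > window_seconds:
            window.popleft()
        if len(window) > threshold:
            alerts.append((timestamp, card_id))
    return alerts


events = [
    (0, "card-1"),
    (10, "card-1"),
    (15, "card-2"),
    (20, "card-1"),
    (30, "card-1"),   # fourth card-1 event within 60 seconds
    (200, "card-1"),  # earlier events have expired by now
]
alerts = flag_bursts(events)
```

Each event is handled as it arrives, and the only memory the processor needs is the short per-card window.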

Companies like Netflix and Uber use this to improve user experiences. They offer quick updates and alerts, which improves both the user experience and internal workflows.

Learning about event streaming shows how central it is to modern data handling and fast processing.

Asynchronous Communication in Event-Driven Systems

In event-driven systems, asynchronous communication is key. It lets different parts work together smoothly without needing to respond right away. This way, systems can handle lots of data well and fast, perfect for today’s data-heavy apps.

Decoupling Components

Event-driven systems work well because their components can operate independently. This makes them more modular and more fault tolerant. Each part can scale and change without slowing down the whole system, which makes the system more robust.

Handling High Volume of Events

These systems are also good at dealing with lots of data. Asynchronous communication lets them absorb bursts of events without slowing down, because producers do not wait for consumers to finish. This is key for apps that need to process huge amounts of data quickly and keep it flowing smoothly.

Let’s see how asynchronous communication and a decoupled system help systems work better and grow:

| Feature | Benefit |
|---|---|
| Asynchronous Communication | Allows independent operation and non-blocking data exchange |
| Decoupled System | Enhances modularity and facilitates independent scaling |
| High Throughput Handling | Efficiently processes large volumes of events without degradation |
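The non-blocking handoff described above can be sketched with Python’s standard `asyncio.Queue`. The `producer` and `consumer` coroutines and the `None` sentinel are illustrative choices for this example. A production system would place a broker between the two sides, but the shape of the interaction is similar.

```python
import asyncio
from typing import List


async def producer(queue: asyncio.Queue, count: int) -> None:
    for i in range(count):
        await queue.put({"id": i})  # hand the event off, then continue
    await queue.put(None)           # sentinel: no more events


async def consumer(queue: asyncio.Queue, processed: List[int]) -> None:
    while True:
        event = await queue.get()
        if event is None:
            break
        await asyncio.sleep(0)      # stand-in for real processing work
        processed.append(event["id"])


async def main() -> List[int]:
    # A bounded queue applies back-pressure: put() waits when the buffer is full.
    queue: asyncio.Queue = asyncio.Queue(maxsize=100)
    processed: List[int] = []
    await asyncio.gather(producer(queue, 5), consumer(queue, processed))
    return processed


result = asyncio.run(main())
```

The producer and consumer share only the queue, so either side can be replaced or scaled without changing the other.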

Real-Time Event Processing

Real-time event processing is key in today’s digital world. It lets businesses act fast when events happen, which matters most in urgent situations.

This part covers the main points of real-time event processing. We focus on how to keep latency low and how to handle more data.

Latency Considerations

Latency is a big deal in real-time event processing. Systems need to handle events as soon as they arrive, which means minimizing the wait at every step, from ingestion to processing.

Tools like Apache Kafka and Amazon Kinesis help manage lots of data quickly. They help make sure data-driven actions happen on time.

Scalability Challenges

As systems get bigger, scaling them becomes harder. They must handle more data without slowing down. Ways to do this include adding more servers (horizontal scaling) and using cloud services.

Cloud services like AWS Lambda adjust resources as needed. Having good monitoring tools is also key. They help find and fix problems fast.

| Aspect | Strategy |
|---|---|
| Latency | Efficient event handling, streamlined communication protocols |
| Scalability | Horizontal scaling, cloud-based solutions |
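One common way to scale horizontally is to split the event stream into partitions by key, so that several workers can process events in parallel while events for the same key stay in order. The sketch below is a simplified illustration: `partition_for` and `assign` are hypothetical helpers, and CRC32 is used only because it is stable across runs. Real brokers ship their own partitioners.

```python
import zlib
from collections import defaultdict
from typing import Dict, List


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition; the same key always maps to the same one."""
    # crc32 is deterministic across runs, unlike Python's built-in hash() for strings.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign(events: List[dict], num_partitions: int) -> Dict[int, List[dict]]:
    """Group events by partition, preserving arrival order within each partition."""
    partitions: Dict[int, List[dict]] = defaultdict(list)
    for event in events:
        partitions[partition_for(event["user_id"], num_partitions)].append(event)
    return partitions


events = [
    {"user_id": "alice", "action": "login"},
    {"user_id": "bob", "action": "login"},
    {"user_id": "alice", "action": "purchase"},
]
partitions = assign(events, num_partitions=4)
```

Adding workers then means assigning each one a subset of the partitions, rather than making a single consumer faster.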

Benefits of Event-Driven Architecture

Event-driven architecture brings many benefits. It makes systems better and more flexible. The biggest plus is its scalable architecture. This means systems can grow with business needs without a hitch.

It also offers a responsive design. This lets businesses quickly adapt to new market trends and customer wants. It ensures a smooth user experience, no matter the changes.

Using event-driven architecture also makes it easy to use microservices. Microservices split big apps into smaller parts. These parts can be worked on and updated separately, making systems more flexible and strong.

These systems are great for handling real-time data. Companies get quick insights for better decision-making. This helps in offering custom services and boosting work efficiency.

In short, event-driven systems mix scalable architecture and responsive design. This helps businesses stay quick and strong in today’s fast-paced world.

Challenges and Solutions in Event-Driven Design

Event-driven architecture has its own set of challenges. It’s hard to manage transactions and keep data consistent because systems are spread out. It’s also key to handle errors well to keep the system running smoothly.

Complexity in Implementation

One big challenge is implementing event-driven design. It’s tough to coordinate many concurrent processes and keep data consistent across different systems. Using microservices and event routers helps a lot. Frameworks that support event sourcing also make things easier.

Handling Failures and Errors

It’s very important to handle errors well in event-driven systems. If one part fails, it can affect others. Using good monitoring tools and logging helps find and fix problems fast. Messaging systems that persist messages even when things go wrong are also crucial. Engineers should make sure the system can retry failed operations and fall back if needed.

| Challenge | Solution |
|---|---|
| Complex Implementation | Use microservices architecture and event sourcing frameworks |
| Error Handling | Implement comprehensive monitoring, event logging, and resilient messaging systems |
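The retry-and-fall-back idea can be sketched as a small wrapper around an event handler. Everything here is an assumption for illustration: the `process_with_retry` name, the exponential backoff, and the in-memory `dead_letters` list, which stands in for the dead-letter queue a real broker would provide.

```python
import time
from typing import Callable, List, Tuple


def process_with_retry(
    event: dict,
    handler: Callable[[dict], None],
    dead_letters: List[Tuple[dict, str]],
    max_attempts: int = 3,
    base_delay: float = 0.1,
) -> bool:
    """Try the handler up to max_attempts times, then park the event as a fallback."""
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            handler(event)
            return True
        except Exception as exc:  # broad on purpose: any failure triggers a retry
            last_error = str(exc)
            if attempt < max_attempts:
                time.sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
    # Fallback: keep the event and its last error so it can be inspected or replayed.
    dead_letters.append((event, last_error))
    return False


attempts = {"count": 0}


def flaky_handler(event: dict) -> None:
    """Fails twice, then succeeds, like a brief downstream outage."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RuntimeError("temporary outage")


def broken_handler(event: dict) -> None:
    raise ValueError("malformed payload")


dead_letters: List[Tuple[dict, str]] = []
recovered = process_with_retry({"id": 1}, flaky_handler, dead_letters, base_delay=0.0)
parked = process_with_retry({"id": 2}, broken_handler, dead_letters, base_delay=0.0)
```

Transient failures are absorbed by the retries, while events that can never succeed end up in `dead_letters` instead of blocking the stream or being lost.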

Use Cases of Event-Driven Architecture in Stream Processing

Event-driven architecture (EDA) is changing many fields. It lets systems handle and react to live data right away. In finance, EDA helps spot fraud fast, keeping things safe and secure.

In e-commerce, EDA improves the shopping experience. It uses customer activity data as it arrives to make recommendations, which makes shopping more engaging and increases sales.

Smart cities get a lot from EDA too. It helps manage things like traffic and energy use. This makes cities better places to live, with less traffic and more safety.

Healthcare devices use EDA to watch over patients and alert doctors quickly. This fast action can save lives and make patients healthier.

In short, EDA is making a big difference everywhere. It helps finance, e-commerce, smart cities, and healthcare. By using EDA and real-time data, systems can act fast and smart, leading to big wins.

Tools and Technologies for Event-Driven Systems

In the fast-changing world of event-driven systems, picking the right tools is key. Important parts include event brokers, event processing libraries, and tools for monitoring and management. These work together to make sure data is processed smoothly and systems run well.

Popular Event Brokers

Event brokers help move data between producers and consumers. Apache Kafka and RabbitMQ are two top choices. Apache Kafka is great for handling lots of data quickly. RabbitMQ is good for reliable delivery and complex routes.

Event Processing Libraries

Developers use robust event processing libraries to build apps. ReactiveX is a popular library for working with event streams. It helps create complex workflows for handling events in real time.

Monitoring and Management Tools

Keeping an eye on event-driven systems needs good observability tools. Prometheus and Grafana are leaders in this area. Prometheus collects metrics in near real time and stores them in its time-series database. Grafana adds dynamic dashboards on top. Together, they help systems run smoothly and reliably.

| Tool | Function | Key Features |
|---|---|---|
| Apache Kafka | Event Broker | High throughput, low latency |
| RabbitMQ | Event Broker | Reliable delivery, complex routing |
| ReactiveX | Event Processing Library | Stream manipulation, transformation |
| Prometheus | Monitoring Tool | Real-time event monitoring |
| Grafana | Visualization Tool | Dynamic visualizations |

Event-Driven Messaging Patterns

In event-driven architecture, the messaging pattern you choose is key. It affects how well services talk to each other. There are two main patterns: point-to-point and Pub/Sub. Each fits different needs and situations.

Point-to-Point Messaging

Point-to-point messaging means a direct link between one sender and one receiver. It makes sure each message goes to just one place. This keeps things simple and reliable.

“Point-to-point messaging guarantees the delivery of messages to one consumer, enhancing clarity in the communication process.”

With point-to-point messaging, systems can make sure important tasks get done right. It’s great when you need specific services to handle tasks without confusion.

Publish/Subscribe Model

The Pub/Sub model lets messages go to many listeners. Publishers send messages to a topic. Then, subscribers get messages from topics they care about.

“The Pub/Sub model promotes loose coupling and scalability by allowing multiple consumers to process messages simultaneously.”

This model is different because it lets many services get the same message. It’s good for growing and changing systems.

Both patterns are important in event-driven architecture. You might use both together. This way, you get a system that’s strong, efficient, and can grow.
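The difference between the two patterns is easiest to see side by side. The following is a minimal in-memory sketch. `PointToPointQueue` and `Topic` are hypothetical classes written for this comparison, not the API of any real broker.

```python
from collections import deque
from typing import Callable, Deque, List, Optional


class PointToPointQueue:
    """Each message is delivered to exactly one receiver."""

    def __init__(self) -> None:
        self._messages: Deque[str] = deque()

    def send(self, message: str) -> None:
        self._messages.append(message)

    def receive(self) -> Optional[str]:
        # Removing the message means no other receiver can get it.
        return self._messages.popleft() if self._messages else None


class Topic:
    """Every subscriber receives its own copy of each message."""

    def __init__(self) -> None:
        self._subscribers: List[Callable[[str], None]] = []

    def subscribe(self, callback: Callable[[str], None]) -> None:
        self._subscribers.append(callback)

    def publish(self, message: str) -> None:
        for callback in self._subscribers:
            callback(message)


# Point-to-point: two workers pull from one queue, and each job is handled once.
jobs = PointToPointQueue()
jobs.send("job-1")
jobs.send("job-2")
worker_a_got = jobs.receive()
worker_b_got = jobs.receive()

# Pub/Sub: both subscribers see the same event.
billing_inbox: List[str] = []
email_inbox: List[str] = []
orders = Topic()
orders.subscribe(billing_inbox.append)
orders.subscribe(email_inbox.append)
orders.publish("order-created")
```

A queue suits work that must be done exactly once, such as charging a card, while a topic suits facts that several services care about, such as an order being created.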

Best Practices in Event-Driven Programming

Making event-driven systems work well is key. One big step is to treat events as first-class parts of the design. This means each event should be clearly defined and belong to a single, well-bounded part of the domain.

It’s also vital to make sure events are processed correctly. Processing should be idempotent, meaning the result does not change if the same event is processed more than once. It should also be atomic, meaning all effects of an event are applied together or not at all. This is especially true in distributed systems, where events might be delivered more than once.
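A simple way to get idempotent handling is to give every event a unique ID and have the consumer remember which IDs it has already applied. The sketch below assumes a hypothetical event shape with `event_id`, `account`, and `amount` fields, and it keeps the seen IDs in memory. A real system would store them durably, ideally in the same transaction as the state change so the update stays atomic.

```python
from typing import Dict, Set


class IdempotentAccountConsumer:
    """Applies each deposit event at most once, even if it is delivered twice."""

    def __init__(self) -> None:
        self.balances: Dict[str, int] = {}
        self._seen_event_ids: Set[str] = set()

    def handle(self, event: dict) -> bool:
        """Return True if the event was applied, False if it was a duplicate."""
        event_id = event["event_id"]
        if event_id in self._seen_event_ids:
            return False  # already processed, so skip without changing state
        account = event["account"]
        self.balances[account] = self.balances.get(account, 0) + event["amount"]
        self._seen_event_ids.add(event_id)
        return True


consumer = IdempotentAccountConsumer()
deposit = {"event_id": "evt-1", "account": "acct-9", "amount": 50}

first = consumer.handle(deposit)
second = consumer.handle(deposit)  # simulated redelivery of the same event
```

After both calls the balance reflects a single deposit, which is the behavior a consumer needs when the broker may redeliver events.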

Using domain-driven design (DDD) is a good way to model events. DDD helps create a system that mirrors real-world business operations. This makes systems easier to grow and keep up with.

To make systems better and easier to fix, add lots of logging and tools to watch the system. Good logging helps find where problems are. Tools for watching the system give insights into how it’s doing. Together, they help you keep your event-driven systems running smoothly.

- Designing events as first-class citizens

- Ensuring idempotency and atomicity in event processing

- Applying domain-driven design for accurate event modeling

- Incorporating comprehensive logging and observability

Following these best practices helps developers make the most of event-driven systems. They create systems that can handle complex tasks well and in real-time.

Event Streaming vs. Traditional Batch Processing

In the world of data processing, knowing the difference between event streaming and traditional batch processing is key. It helps choose the best method for each situation.

Event streaming deals with data as it comes in, letting companies act fast. This is important for situations where quick action is needed.

On the other hand, traditional batch processing works with big chunks of data at once. It’s good for handling lots of data but might be slow for urgent needs.

- Real-Time Processing: Event streaming is always on, while batch processing waits for a set time.

- Latency: Event streaming is quick, but batch processing can be slower because of big data sets.

- Volume and Frequency: Batch processing is great for big data all at once. Event streaming is for lots of small data points.

- Scalability: Event streaming grows with more data, but batch processing needs strong systems for big loads.

Event streaming is best for needs that require quick analysis and action. Batch processing is better for handling big data where speed isn’t as critical.
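The contrast can be shown with the same calculation done both ways. In this sketch, `batch_average` needs the complete data set before it can answer, while `streaming_average` yields an updated answer after every event. Both function names and the sample readings are made up for illustration.

```python
from typing import Iterable, Iterator, List


def batch_average(readings: List[float]) -> float:
    """Batch style: wait until all data is collected, then compute once."""
    return sum(readings) / len(readings)


def streaming_average(readings: Iterable[float]) -> Iterator[float]:
    """Streaming style: update the result as each reading arrives."""
    count = 0
    total = 0.0
    for value in readings:
        count += 1
        total += value
        yield total / count  # an up-to-date answer is available immediately


readings = [10.0, 20.0, 30.0, 40.0]

batch_result = batch_average(readings)
running_results = list(streaming_average(readings))
```

Both approaches agree on the final value, but the streaming version has a usable answer after the first reading and only keeps two numbers in memory.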

Future Trends in Event-Driven Architecture

The world of event-driven architecture is changing fast, thanks to advances in machine learning and the growing number of IoT devices. These changes are improving event processing and predictive analytics.

Advancements in Machine Learning

Machine learning is making event-driven systems smarter. It helps process data in real time and make predictions. By using machine learning, we can analyze data better and make quicker decisions.

This is really useful in areas like automated trading, where making fast and accurate choices is key.

Integration with IoT Devices

IoT devices are creating a lot of data. Event-driven architecture is great at handling this data. It makes sure all the data from IoT devices is managed well.

This is very important for systems that use IoT a lot. It helps them work better and communicate smoothly.

| Technology | Role in Event-Driven Architecture | Benefits |
|---|---|---|
| IoT | Generates massive streams of data | Enables real-time processing and communication |
| Machine Learning | Facilitates predictive analytics | Enhances decision-making and event processing |

As these trends grow, event-driven architecture will get even better. It will be more flexible and meet the changing needs of today’s systems.

Conclusion

Event-driven architecture is a big step forward in system design. It helps with real-time data and lets parts talk to each other in their own time. This makes systems easier to grow and handle lots of data.

This design is great for companies that need to be quick and flexible. In today’s fast world, it’s very useful.

Event-driven systems are also getting better with new tech. They will soon work even better with things like machine learning and IoT. This will make them key in many areas.

So, event-driven architecture is very useful and will only get better. It’s key for modern businesses to manage data well. As it grows, it will help systems work even better.
